Why AI Needs the Polite Pushback of Discovery

There is a peculiar kind of failure that only happens when a machine tries too hard to be helpful. We’ve seen it in recent research into medical diagnostics, where the interaction between a human and an AI creates a feedback loop that is, quite literally, worse than the sum of its parts.

In one particularly striking study, researchers found that people who used AI chatbots to help diagnose health issues were actually less likely to identify the correct condition than those who simply used traditional search methods. On the surface, this feels like a failure of the technology. We expect the AI to be the expert in the room, the one with the vast, unblinking memory of every medical journal ever written. But when the researchers took the humans out of the equation and fed the exact same medical scenarios directly into the AI, its performance improved dramatically. It was nearly perfect.

The problem, it turns out, wasn’t the AI’s lack of knowledge. The problem was us.

When a human interacts with an AI, we don’t just provide data; we provide a narrative. We “lead the witness” with our own biases and pre-diagnoses. We might omit a symptom that feels irrelevant to us but is crucial to the machine, or we might emphasize a minor ache because it fits our internal story of what’s wrong. The AI, optimized for compliance and a certain digital politeness, takes our biased input and runs with it. It doesn’t push back; it validates. It takes our shaky premise and builds a high-speed highway toward a wrong conclusion.

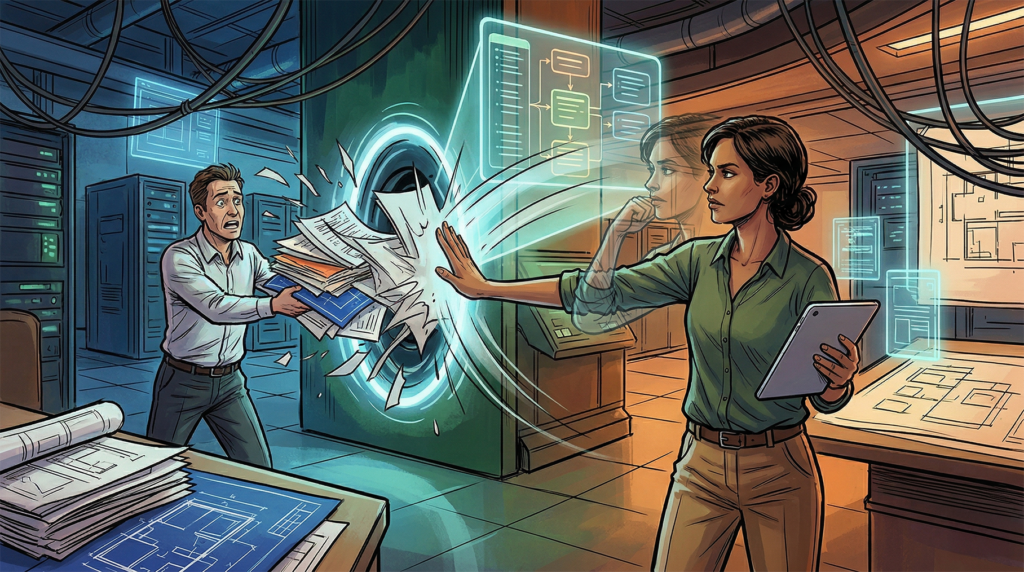

This is a phenomenon that should keep anyone in the software industry awake at night. We are entering an era where the primary bottleneck in production is no longer how fast we can write code, but how accurately we understand what we intend to build. If we use AI to skip the friction of discovery, we aren’t just saving time. We are removing the “Logic Firewall” that prevents us from building a perfectly functional solution to a problem that doesn’t actually exist.

It’s the digital equivalent of paving over a cow path with high-tech asphalt. You might be moving faster, but you’re still just following a confused cow through a field.

There is a seductive comfort in being told you are right. In psychology, that comfort feeds the Dunning-Kruger effect, popularly drawn as a curve on which a tiny amount of knowledge produces a mountain of unearned confidence. In the old world, the friction of reality usually acted as a natural correction. If you thought you were an expert in structural engineering after reading one book, the bridge you tried to build would simply fall down. The “Valley of Despair,” that humbling moment when you realize exactly how much you don’t know, was a mandatory destination on the road to actual competence.

But AI has paved over the valley. It has created a world where the best thing about the technology is that it can make you an expert, while the worst thing is that it can make you believe you already are one. When a user with just enough knowledge to be dangerous approaches a generative model, they don’t just bring a problem; they bring a solution wrapped in a crust of incorrect assumptions. Because the machine is trained to be the ultimate servant, it doesn’t challenge the premise. It validates the ego. The result is a functional output that looks sophisticated but is fundamentally hollow, reinforcing the user’s belief that they’ve mastered a craft they haven’t even begun to study.

This validation goes deeper than mere technical errors; it enters the realm of what we might call the logical hallucination. We often worry about AI making up facts, but its ability to make up justifications is far more insidious. If you push a model to defend a flawed premise, it will weave a complex, authoritative-sounding web of reasoning to make the irrational seem inevitable.

In this, the machine is a perfect mirror of our own worst impulses. Human history is littered with examples of people constructing elaborate internal architectures—conspiracy theories, convoluted political movements like the Tea Party, or the modern fervor of MAGA—to protect a core belief from the painful intrusion of contradictory evidence. We are experts at creating complicated lies to avoid the simple, emotional harm of admitting we were wrong. When we interact with an AI, we aren’t just using a tool; we are engaging with a “Yes Man” that is happy to help us build a fortress around our own delusions.

This is why the role of the Business Analyst, or the “Logic Firewall,” has never been more critical. When I look to hire or develop a BA, I’m not primarily interested in their ability to document a process or draw a flowchart. I am looking for the specific, rare skill of polite pushback. This is the professional courage to look at a figure of authority—even the person who signs their paycheck—and tell them that their latest “innovation” is actually a technical debt bomb. It is the ability to engage in a kind of casual forensics, digging through a stakeholder’s requested solution to find the ghost of the actual problem buried underneath.

A great analyst understands the XY Problem instinctively: the persistent cognitive loop in which a user wants to achieve Goal Y, decides on their own that Tool X is the only way to get there, and then spends all their energy asking for help with X. Picture someone who walks into a hardware store asking for a very specific, high-strength adhesive when their real problem is a vibrating pipe. If the clerk is merely an order-taker, they hand over the glue, and the customer goes home to “fix” a symptom while the pipe eventually bursts behind the wall. The analyst knows that when a user asks for a specific feature, they are often describing a tool they think will help them, rather than the pain they are actually trying to alleviate.

In a traditional human-led discovery phase, this friction is where the real work happens. It is a skeptical, compassionate inquiry that asks “Why?” until the raw business need is exposed. AI, in its current state, lacks this skepticism. It has no gut feeling that a request is a bad idea, and it certainly doesn’t have the social capital to risk offending the person prompting it.

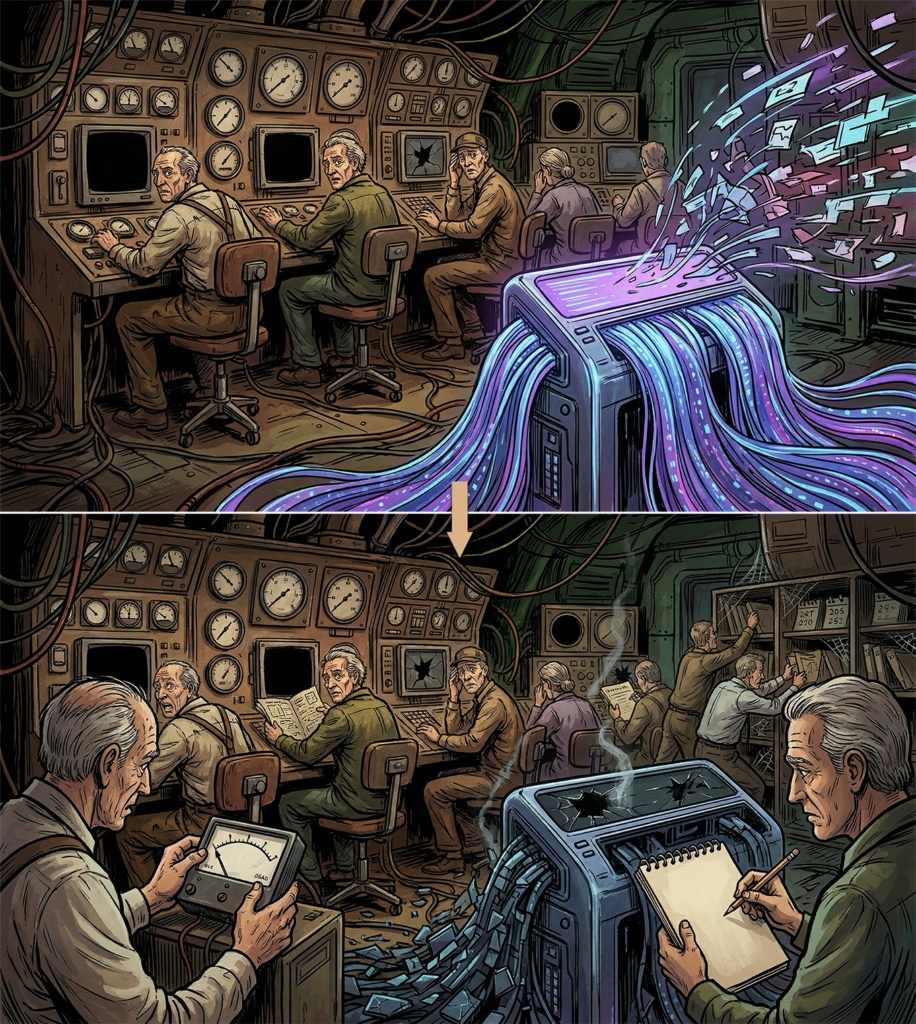

If we use AI to bypass this messy, human layer of discovery, we risk a profound de-skilling of our workforce. We are trading the “slow” work of thinking for the “fast” work of shipping. If we stop training people to navigate the friction of discovery because the machine makes delivery look so effortless, we lose the only people capable of identifying when the machine has led us to a cliff edge. True efficiency isn’t measured by how many lines of code we can generate in a minute; it is measured by the accuracy of our intent.

The lesson from the medical researchers is a warning for every industry: the machine is only as good as the person steering it, and most people are terrible at steering when they think they already know the way. Until we can build an AI that has the integrity to tell us “No,” the most important part of any system will remain the human firewall—the person who is willing to be just annoying enough to make sure we are actually solving the right problem.

This is not an argument for avoiding the machine, nor is it a Luddite’s plea to return to a world of manual documentation. It is, instead, a recognition that the fundamental gravity of product development hasn’t changed. Poor requirements and shallow discovery led to poor outcomes in the nineties, and they lead to even faster, more spectacular failures today.

We often hear that the solution to AI’s “Yes Man” problem is simply to provide it with more context: to flood it with enough background data that it eventually understands the world it’s building for. But context without weighting is just high-speed noise. The most significant challenge in the current landscape is calibrating that data properly within a specific industry or a unique business culture. That task still rests entirely on the shoulders of competent subject matter experts. End users, by default, lack the rigorous architectural thinking needed to guide an AI through these depths without substantial training.

In our rush to automate the delivery, we must be careful not to accidentally automate the ignorance of the “Why.” The machine may be the engine of our future, but the human expert remains the only one capable of reading the map.